| Fri. May 15, 2026 |


Introduction

Artificial Intelligence (AI) is complicating politics in ways not seen since the invention of the internet.[1] On the one hand, AI is seen as a silver bullet that lets authoritarian rulers make repression cheaper, faster, and more decisive.[2] On the other hand, AI is also seen as a liberalizing force that can galvanize activist mobilization in new and powerful ways.[3] In Southeast Asia, this dilemma is particularly salient, as AI adoption stands at a crucial inflection point. In 2024, AI-related investments surged to US$55.2 billion across the region, with a projected compound annual growth rate of 25 percent between 2023 and 2028.[4][5] Driven by a tenfold surge in AI use, data centers have mushroomed to the point that existing operational capacity is expected to triple by 2030.[6] Yet the region’s diverse regime types suggest that AI will be neither developed nor deployed uniformly. This regime heterogeneity will have a sizable impact on where the region as a whole is headed in terms of AI development.

Domestic Regime Type and AI Policy Formation

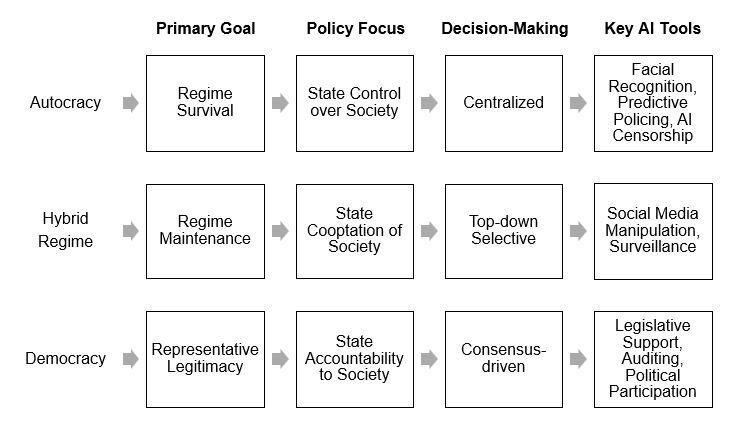

Ideal-type Model of AI Policy Formation by Regime Type. Author’s own diagram.

Different political regimes form AI policies differently. Unlike in democratic regimes, policy decisions in autocracies are made by a small circle of decision-makers.[7] This means that the ruler’s policy preferences dominate in the absence of electoral channels for public demands. Limited government turnover also reduces the likelihood of major policy shifts over time.[8] In the absence of representative institutions, closed autocracies rely on performance legitimacy rather than democratic accountability to remain in power.[9][10] Accordingly, digital technologies are adopted primarily to strengthen regime survival.[11][12][13][14] In modern autocracies, AI is used to automate censorship processes or to suppress dissent before it emerges. Powerful filters discourage the spread of unfavorable information while AI bots saturate information channels with pro-regime narratives.[15] AI-powered facial recognition enables the selective prosecution of dissidents.[16] Even more insidiously, AI can be used to hack into online accounts to carry out personalized forms of repression. With scant oversight, such actions are often carried out with impunity.

Hybrid regimes, which combine features of democracy and authoritarianism, reveal how AI policy formation is shaped by contradictions. Although they retain nominally democratic institutions such as legislatures, elections and constitutions, these are used to entrench authoritarian rule.[17][18] Power-sharing arrangements help divide spoils between dictators and elites. Indeed, these spoils “may be monetary… [or] a compromise over personnel appointments or policy direction”.[19] Autocrats also use these institutions to gauge public support, enabling a limited form of responsiveness that exceeds that of closed autocracies but remains more centralized and less deliberative than in democracies.[20][21] In a semi-accountable political context, hybrid rulers prefer to use AI in less systemic and more covert ways to reinforce incumbency and distort public perception of electoral competition.[22] Their preference is to manipulate the flow of information rather than impose a universal form of censorship.[23] In this way, the regime can maintain an “open façade” for the legitimacy that it confers, without opening up so far as to fully democratize and risk overthrow by popular forces.

Conversely, AI policy formation in democracies follows a different logic due to the distinct relationship between citizens, institutions, and governments. Notably, policy formation in democracies is driven by societal demands for government solutions and by political competition over the supply of policies in response to these demands. Politicians and parties are compelled to understand what voters want and respond to it because their careers depend on it.[24][25] Unlike autocracies, democracies impose ethical and legal constraints that limit state overreach and protect individual rights.[26] AI technology is freely accessible to the public in most democracies rather than restricted as it is in some autocracies.[27] Increasingly, legislators are using AI to draft technical legislation to sidestep the influence of lobbyists.[28] AI is also being deployed to lower the barriers to entry for local candidates running for public office.[29] The presence of a civil society further generates the diagonal accountability needed to prevent the state’s concentration of power over AI governance.[30]

Vietnam and AI Policy Formation in a One-Party Autocracy

In Southeast Asia, Vietnam’s one-party regime closely illustrates how authoritarian politics interact with bureaucratic politics and interest group dynamics. Briefly, the ruling Communist Party (CPV) exercises a monopoly of political power through a Leninist regime which has permitted a style of policy formation driven both by ideological convictions and pragmatic policymaking. Digital policies, in this context, are funneled through an institutional structure that reflects the developmental logic of the state, the CPV’s elite politics, and other sundry vectors of regime legitimacy.[31] Despite factional tensions within the CPV, strong elite buy-in for digital transformation has traditionally ensured a cohesive transition strategy.[32] Nevertheless, the “black box” nature of the party-state system means that the legitimacy of AI adoption is constrained by various ethical hazards, transparency deficits and accountability gaps.[33]

To Lam, the current party and state leader, considers AI adoption a “matter of survival” for Vietnam.[34] Mirroring this urgency, the National Assembly promulgated a comprehensive law on regulating AI – the first of its kind in the region.[35] But as a one-party state, the regime has limited public accountability mechanisms to credibly prevent abuse of power. For one, Vietnam is judged to have weak rule of law and a “closed” civic space.[36][37] The CPV maintains a hegemonic role in the media environment down to the editorial levels.[38] In 2023, the government went so far as to compel Facebook to use AI to track and remove content deemed subversive to the state.[39] It has also used various other digital means to “sanitize its image online”, including through censorship and manipulation of public opinion.[40]

It is in this context that the CPV is expected to pursue AI modernization with regime maintenance in mind. It has in place a Chinese-style Cybersecurity Law which emphasizes data localization and information control.[41] Another backbone of this project is the construction of an extensive physical surveillance network, with as many as 670,000 Hikvision cameras reportedly being installed throughout the country.[42] As in China, AI-driven monitoring systems are being installed in high-tech locales known as “Smart Cities” where an increasing number of citizens reside.[43] Likewise, the introduction of chip-based identification cards for financial transactions has been suspected of being a means to “monitor and control its populace in the name of public safety and social security”.[44] There is also the question of how AI may complement the CPV’s Force 47, its 10,000-strong military cybertrooper unit. A report published by the University of Oxford reveals that Vietnam has “high cyber trooper capacity” involving large numbers of staff, high budgetary expenditures, and voluminous funds for research and development. It would be unsurprising, then, if Force 47 were outfitted with new AI capabilities to carry out its duties more effectively.[45]

Naturally, these policies and the secrecy which surrounds their formation have led to considerable public skepticism.[46] On this point, the government has responded with adaptive policy accountability rather than outright repression. For instance, the backlash against Hanoi’s hasty implementation of facial recognition systems prompted the creation of a limited data disclosure policy in 2022, which eventually culminated in the Personal Data Protection Law (PDPL) in 2025.[47] When a similar fallout over AI contact tracing arose during the COVID-19 pandemic, the government responded in a similar way by providing modular options to “opt-out”.[48] Indeed, executive accountability appears to have allowed the CPV to skirt popular demands for participatory politics. At the same time, the lack of public consultation is also the reason why AI ethical standards are comparatively lower in autocracies than in democracies.[49] In this connection, the Vietnamese party-state stands apart from other authoritarian regimes in Asia, namely China and Cambodia, in that the regime prefers programmatic over reactive marginal reforms.[50] This predilection encourages AI policy formation to be responsive, though still far from the participatory standards of Western democracies.[51][52] Where AI development has been opened to participants “outside” of the regime, the state has largely entrusted it to a motley crew of elite corporations such as Vingroup, Viettel, and FPT Corporation.[53] Ultimately, technocratic leanings and powerful feedback loops reinforce the regime’s claim to performance legitimacy, which further enhances the regime’s stability in the midst of its digital transformation.

Singapore and AI Policy Formation in a Hybrid Regime

In Singapore, the ruling People’s Action Party (PAP) has been in power since 1959. As a hybrid regime, Singapore has many of the procedural features of advanced industrial democracies, namely a Westminster-style parliamentary system that was inherited from British colonial rule. But certain features of the regime, such as its electoral laws, restrictions on civil liberties and preservation of repressive legislation, serve to keep the political system firmly authoritarian.[54][55] In matters of policy formation, the PAP enjoys full control over the political agenda as well as the formulation of policy alternatives.[56] It has permitted little room for independent political advocacy, and in the rare instances where some autonomy is given, it is granted only to organizations administratively linked to the government. It is in this context that policy accumulation is significantly lower in Singapore than in advanced democracies at similar developmental levels.[57]

Here, national policies are formulated through a technocratic regime that balances innovation with ethical regulations but limits public consultation.[58] For decades, the Infocomm Media Development Authority (IMDA), alongside its institutional predecessors, has functioned as the main regulatory and advisory body for information technology and internet governance.[59] The IMDA Act endows the agency with the legal power to “regulate the telecommunications system and services” and “ensure that content is not against the public interest, public order or national harmony”.[60] In this way, the government possesses a virtually free hand over the instruments of digital control.[61][62] But unlike regional autocracies, the PAP has maintained a “lighter” approach to regulating the internet.[63] Consistent with hybrid regimes elsewhere, the PAP prefers to use existing laws to co-opt digital strategies rather than to innovate entirely new ways to control the information environment.[64] Unlike closed autocracies, it has avoided totalitarian repression of the media altogether.

In 2019, the government launched the National AI Strategy (NAIS) to coordinate the city-state’s AI policies.[65] The strategic document aims to cultivate AI capabilities in key sectors like healthcare, manufacturing, and cybersecurity.[66] The Model AI Governance Framework, released in the same year, forms the guiding principle for the NAIS’s ethics and governance. While it is similar in many ways to the United States Blueprint for an AI Bill of Rights, it is noticeably quiet on the use of AI for surveillance (ibid). Yet, in contrast to other autocracies like China, Singapore maintains much more rigorous AI regulatory standards.[67] For one, the IMDA has committed itself to the principle of “human-centricity”[68] and has made several breakthroughs in AI governance. For instance, the Model AI Governance Framework for Agentic AI, launched in January 2026, is the first of its kind in the world for regulating AI agents capable of “autonomous planning, reasoning, and action”.[69][70]

But as Dan Slater argues, party-based authoritarian regimes are durable because ruling parties coordinate the execution of decisions and not necessarily their collective formulation.[71] The highly centralized organization of the NAIS is problematic for this reason. As it stands, the program is parked under the Smart Nation Group (SNG), alongside its implementation arm, the Government Technology Agency (GovTech), which is a statutory body placed under the powerful Prime Minister’s Office (PMO). While formally placed in the PMO, this apparatus is administratively managed by the Ministry of Digital Development and Information (MDDI). This steering structure, distinguished by the dominance of the ruling party and elite technocratic groups, centralizes authority on a body that has in the past acted in ways contrary to public expectations.[72][73][74]

In keeping with its state-centric technocratic style, the PAP has avoided opening fuller avenues for public participation, instead preferring to channel deliberation through elite advisory bodies, namely the AI Ethics Advisory Council, Committee of the Future Economy, and the newly established National AI Council.[75] In this way, the government can co-opt public opinion while the SNG directs national AI policy in a fashion similar to how industrial policies were directed under the “pilot agencies” of the classical East Asian developmental states.[76] Where public backlash is concerned, the PAP has responded with legal remedies, but usually only after the fact.[77] This is an issue which would likely not arise if the policy process were fully transparent in the first place. The European Center for Not-for-Profit Law (ECNL), for instance, has explicit guidelines on how to democratize AI policy engagement to maximize public participation in the European Union.[78] This could serve as a model for policy openness. Absent such reforms, however, the regime will continue to manage consultation carefully while avoiding harsh repression, as the electoral costs of repression would be considerable.

Malaysia and AI Policy Formation in a New Electoral Democracy

Malaysia is rated as a “partly free” country that has just narrowly made the mark to qualify as an electoral democracy.[79][80] From 1957, Malaysia was governed as a single-party dominant regime under the Barisan Nasional (BN) until it was ousted by an opposition coalition in 2018.[81] Under the BN regime, policy formation was “centralized and cloaked in secrecy”, emanating almost exclusively from the Prime Minister’s Department (PMD).[82] While the country no longer has a single party dominating politics, the current Pakatan Harapan (PH) government continues to retain many of the authoritarian institutions of the former regime, including its suite of repressive laws, government-linked companies (GLCs), and state regulatory machines (ibid). Owing to the country’s divided and ranked social structure, consensual politics emerge as policies must account for ethnic and class interests.[83] In this respect, policymaking is a highly centralized process with some opportunities for consensus building among interest groups.

However, the electoral democracy in which Malaysia now finds itself has transformed this process fundamentally. For one, elections are now more meaningful and less predictable than before. Functionally, this constrains the power of individual politicians and parties to act unaccountably while improving the articulation of citizen preferences and goals into public policies. In addition, the political openness which now prevails has reinvigorated civil society and liberalized political norms, though unevenly between the two.[84] It is in this context that one might expect the government to be constrained by two new vectors: competitive elections and a robust civil society. Some of these limitations are reflected in its AI policy formation process. Launched in December 2024, the National AI Office (NAIO) is the central authority coordinating the country’s AI policies. The NAIO is seeded under MyDIGITAL Corporation, an agency under the Ministry of Digital. Notably, the Ministry is tasked with cross-sectoral AI development, requiring it to coordinate governance responsibilities across various ministries, higher education institutions, special-purpose vehicles, and national investment boards.[85] In this respect, the NAIO follows a pilot agency model similar to Singapore’s SNG.

At the same time, the broad latitude granted to government ministries carries inherent risks. For instance, legal jurisdiction over the internet falls under the Malaysian Communications and Multimedia Commission (MCMC), housed within the Ministry of Communications. This ministry wields considerable authority through the enforcement of the Communications and Multimedia Act (CMA) 1998, a potent legislative tool frequently criticized for destabilizing democratic politics and stifling dissent.[86] Yet, the uncertainty inherent in electoral competition can force would-be autocrats to hesitate before weaponizing AI policies for autocratic ends. For instance, the prime minister’s call for AI technologies to be equipped with “Islamic values” was met with noticeable backlash from netizens, particularly from the country’s large ethnic minority groups.[87] While such opposition can readily be censored in a closed autocracy or shelved in an electoral autocracy, suppressing it in a competitive democracy carries much higher political risks. Further, when component parties of the ruling coalition suffered a crushing defeat in the 2025 Sabah state elections, a chorus of government politicians, fearing nationwide backlash, called for renewed efforts to accelerate campaign pledges and revitalize voter support rather than issuing aggressive, top-down policy mandates.[88]

The qualitative character of the regime has indeed changed, and government responsiveness along with greater civil society engagement seems to have taken precedence over technocratic authoritarianism. Notably, the NAIO adopts a “quadruple helix collaboration model” that is unique in the region for bringing together government, academia, industry and civil society forces in designing AI governance.[89] More exceptionally, the NAIO has facilitated interfaith dialogues to encourage participation from the country’s mosaic of communities.[90] The pertinent challenge now lies in equalizing AI access in order to prevent a situation where a strong state becomes stronger or even autocratic because of AI, or a weak state becomes even weaker from threat actor or civil society actions.[91] Under a consolidating democracy with emerging governance gaps like Malaysia,[92] one might expect that a frontier technology as powerful as AI may fall into the wrong hands and lead the country down a path of no return.

Conclusion

In Southeast Asia, AI policy formation is decisively shaped by regime type, producing both shared priorities and distinct political outcomes. Across Vietnam, Singapore, and Malaysia, governments converge in recognizing AI as a strategic and developmental imperative and in centralizing coordination through pilot agencies and technocratic steering structures. Yet, the purposes, constraints, and accountability mechanisms governing AI diverge markedly.

In Vietnam’s one-party autocracy, AI is primarily embedded within a logic of regime survival, enabling surveillance, information control, and performance legitimacy with limited public accountability. Singapore’s hybrid regime illustrates a middle path in which technocratic governance and relatively robust regulatory standards coexist with centralized control and constrained public participation. Malaysia’s emerging electoral democracy, by contrast, exhibits the strongest pressures for responsiveness and plural participation, even as authoritarian institutional legacies continue to create risks of misuse.

Taken together, the comparison underscores a central finding: while AI adoption is universal across regime types, its political trajectory is not. Instead, AI amplifies existing institutional logics: reinforcing control in autocracies, enabling calibrated governance in hybrid regimes, and expanding inclusiveness in electoral democracies. Consequently, a region-wide AI standard will be elusive.

Foo Siew Jack is Research Associate at HELP University, where he is currently working on a book about federal-state relations in Malaysia. His written works have been featured in the East Asia Forum, Eurasia Review, Daily Sun Bangladesh and Stratsea. He was most recently cited in a report produced by the East Asia Institute in South Korea. He holds an MA in International Relations from the University of Nottingham and a BASocSc in Global Studies from Monash Un

[1] United Nations. “The Impact of Digital Technologies.” United Nations. Accessed April 25, 2026. https://www.un.org/en/un75/impact-digital-technologies.

[2] Anastasopoulos, L. Jason, and Jie Lian. “The Limits of Authoritarian AI.” Journal of Democracy, April 2026. https://www.journalofdemocracy.org/articles/the-limits-of-authoritarian-ai/.

[3] Cupac, Jelena, Hendrik Schopmans, and Irem Tuncer-Ebetürk. “Democratization in the Age of Artificial Intelligence: Introduction to the Special Issue.” Democratization 31, no. 5 (July 3, 2024): 899–921. https://doi.org/10.1080/13510347.2024.2338852.

[4] Sufianti, Sufianti. “AI, Digital Investments May Be Next Leg of SE Asia’s FDI Growth.” Bloomberg Professional Services, December 9, 2024. https://www.bloomberg.com/professional/insights/regional-analysis/ai-digital-investments-may-be-next-leg-of-se-asias-fdi-growth/.

[5] Hollis, Cheyenne. “AI Investment in Asia Heats Up as More Companies Embrace Innovation.” Asian Insiders, February 5, 2026. https://asianinsiders.com/2025/03/18/2025-ai-investment-asia/.

[6] The Straits Times. “South-East Asia’s Data Centre Boom for AI.” The Straits Times, February 26, 2026. https://www.straitstimes.com/asia/se-asia/where-artificial-intelligence-lives-south-east-asias-data-centre-boom.

[7] Martus, Ellen. 2017. “Contested Policymaking in Russia: Industry, Environment, and the ‘Best Available Technology’ Debate.” Post-Soviet Affairs 33(4): 276–97.

[8] Wright, Joseph, and Bak, Daehee. 2016. “Measuring Autocratic Regime Stability.” Research & Politics 3(1). http://journals.sagepub.com/doi/10.1177/2053168015626606.

[9] Chang, Alex, Chu, Yun-han, and Welsh, Bridget. 2013. “Southeast Asia: Sources of Regime Support.” Journal of Democracy 24(2): 150–64.

[10] Fukuyama, Francis, Chris Dann, and Beatriz Magaloni. “Delivering for Democracy: Why Results Matter.” Journal of Democracy 36, no. 2 (April 2025): 5–19. https://doi.org/10.1353/jod.2025.a954557.

[11] Dekeyser, Thomas, Casey R. Lynch, and Sophia Maalsen. “AI Authoritarianism: Towards an Analytical Framework.” Transactions of the Institute of British Geographers, November 23, 2025. https://doi.org/10.1111/tran.70048.

[12] Ding, J., and P. Triolo. 2021. “Lofty Principles, Conflicting Incentives: AI Ethics and Governance in China.” Mercator Institute for China Studies (MERICS). https://merics.org/en/report/lofty-principles-conflicting-incentives-ai-ethics-and-governance-china

[13] Zeng, J. 2020. “The Chinese Approach to Artificial Intelligence: An Analysis of Policy, Ethics, and Regulation.” AI & Society 35 (1): 59–77. https://doi.org/10.1007/s00146-019-00902-2.

[14] Polyakova, A. and Meserole, C. 2019. Exporting Digital Authoritarianism: The Russian and Chinese Models, Brookings Institution. United States of America. Retrieved from https://coilink.org/20.500.12592/rwbp8q

[15] Frantz, Erica, Andrea Kendall-Taylor, and Joseph Wright. “Digital Repression in Autocracies.” Varieties of Democracy, March 2020. https://www.v-dem.net/media/publications/digital-repression17mar.pdf.

[16] Xu, Xu. “To Repress or to Co-opt? Authoritarian Control in the Age of Digital Surveillance.” American Journal of Political Science 65, no. 2 (April 7, 2020): 309–25. https://doi.org/10.1111/ajps.12514.

[17] Schedler, Andreas. “Electoral Authoritarianism.” Emerging Trends in the Social and Behavioral Sciences, May 15, 2015, 1–16. https://doi.org/10.1002/9781118900772.etrds0098.

[18] Gandhi, Jennifer, and Adam Przeworski. “Authoritarian Institutions and the Survival of Autocrats.” Comparative Political Studies 40, no. 11 (November 2007): 1279–1301. https://doi.org/10.1177/0010414007305817.

[19] Svolik, Milan W. The Politics of Authoritarian Rule. Cambridge: Cambridge University Press, 2013.

[20] Gandhi, Jennifer. Political Institutions under Dictatorship. Cambridge: Cambridge University Press, 2010.

[21] Williamson, Scott, and Beatriz Magaloni. “Legislatures and Policy Making in Authoritarian Regimes.” Comparative Political Studies 53, no. 9 (March 29, 2020): 1525–43. https://doi.org/10.1177/0010414020912288.

[22] Yardimci-Geyikci, Sebnem. “AI, Conditioned Uncertainty, and the Functions of Elections in Hybrid Regimes.” European Consortium for Political Research. Accessed April 25, 2026. https://ecpr.eu/Events/Event/PaperDetails/91063.

[23] Wright, Nicholas D. “Artificial Intelligence and Domestic Political Regimes: Digital Authoritarian, Digital Hybrid, and Digital Democracy.” Jstor, October 1, 2019. https://www.jstor.org/stable/resrep19585.9.

[24] Hobolt, Sara Binzer, and Klemmensen, Robert. 2008. “Government Responsiveness and Political Competition in Comparative Perspective.” Comparative Political Studies 41(3): 309–37.

[25] Bingham Powell, G. “The Quality of Democracy: The Chain of Responsiveness.” Journal of Democracy, October 2004. https://www.journalofdemocracy.org/articles/the-quality-of-democracy-the-chain-of-responsiveness/.

[26] Wright, Nicholas D. Artificial Intelligence and Democratic norms, August 2020. https://www.ned.org/wp-content/uploads/2020/07/Artificial-Intelligence-Democratic-Norms-Meeting-Authoritarian-Challenge-Wright.pdf.

[27] Davidson, Helen. “‘Political Propaganda’: China Clamps down on Access to ChatGPT.” The Guardian, February 23, 2023. https://www.theguardian.com/technology/2023/feb/23/china-chatgpt-clamp-down-propaganda#:~:text=ChatGPT, an artificial intelligence chat,cut access to the programs.

[28] Schneier, Bruce. “AI Will Write Complex Laws.” Harvard Kennedy School, April 15, 2026. https://www.hks.harvard.edu/centers/mrcbg/programs/growthpolicy/ai-will-write-complex-laws.

[29] Savaget, Paulo, Tulio Chiarini, and Steve Evans. “Empowering Political Participation Through Artificial Intelligence.” Science and Public Policy 46, no. 3 (November 5, 2018): 369–80. https://doi.org/10.1093/scipol/scy064.

[30] Callirgos, Paola Galvez. “Pathways to Inclusion: Advancing Civil Society’s Role in AI Governance.” Globethics, November 21, 2025. https://globethics.net/publications/pathways-inclusion-advancing-civil-societys-role-ai-governance.

[31] Nguyen, Tien-Duc, and Linh-Giang Nguyen. “Responsive Centralism: The Political and Regulatory Landscape of Vietnam’s Digital Transformation.” Project MUSE, December 2025. https://muse.jhu.edu/pub/70/article/978595/pdf.

[32] Ministry of Science and Technology. “Chính Sách Của Nhà Nước Về AI: Cần Những Định Hướng Trọng Tâm, Mang Tính Quyết Định.” Bộ Khoa Học và Công Nghệ, December 9, 2025. https://mst.gov.vn/chinh-sach-cua-nha-nuoc-ve-ai-can-nhung-dinh-huong-trong-tam-mang-tinh-quyet-dinh-197251206121442969.htm. See also Foo, Siew Jack. “Transition to Personalist Rule in Vietnam?” Stratsea, April 17, 2026. https://stratsea.com/transition-to-personalist-rule-in-vietnam/.

[33] Tran, Huong T., Bac H. Dang, Mai T. Nguyen, Quynh T. Pham, and Phuoc V. Nguyen. “Artificial Intelligence Ethics in Authoritarian Vietnam: Governance, Trust, and Societal Tensions.” Policy Design and Practice 8, no. 4 (July 13, 2025): 427–41. https://doi.org/10.1080/25741292.2025.2529625.

[34] Pham, Linh. “Stand Firm and Self-Reliant - Matter of Survival for Vietnam in AI Era.” Hanoitimes, October 29, 2025. https://hanoitimes.vn/stand-firm-and-self-reliant-matter-of-survival-for-vietnam-in-ai-era.890821.html.

[35] The Straits Times. “Vietnam AI Law Takes Effect, First in South-East Asia.” The Straits Times, March 1, 2026. https://www.straitstimes.com/asia/se-asia/vietnam-ai-law-takes-effect-first-in-south-east-asia.

[36] Tan, Jun-E, Kathleen Azali, and Katerina Francisco. “Governance of Artificial Intelligence (AI) in Southeast Asia.” EngageMedia, December 2021. https://engagemedia.org/wp-content/uploads/2021/12/Engage_Report-Governance-of-Artificial-Intelligence-AI-in-Southeast-Asia_12202021.pdf.

[37] “Rule of Law Index.” World Justice Project, 2025. https://worldjusticeproject.org/rule-of-law-index/country/Vietnam.

[38] Haenig, Martin Albrecht, and Xianbai Ji. “A Tale of Two Southeast Asian States: Media Governance and Authoritarian Regimes in Singapore and Vietnam.” Asian Review of Political Economy 3, no. 1 (March 22, 2024): 1–23. https://doi.org/10.1007/s44216-024-00024-6.

[39] Strangio, Sebastian. “Vietnam Calls for Tech Giants to Use AI to Remove ‘Anti-State’ Content.” The Diplomat, July 3, 2023. https://thediplomat.com/2023/07/vietnam-calls-for-tech-giants-to-use-ai-to-remove-anti-state-content/.

[40] Luong, Dien Nguyen An. “Flexing Censorship Muscles and Leveraging Public Sentiments: How the Vietnamese State Scrambles to Sanitise Its Image Online.” ISEAS Perspective 2023/60. ISEAS Yusof Ishak Institute, July 26, 2023. https://www.iseas.edu.sg/articles-commentaries/iseas-perspective/2023-60-flexing-censorship-muscles-and-leveraging-public-sentiments-how-the-vietnamese-state-scrambles-to-sanitise-its-image-online-by-dien-nguyen-an-luong/.

[41] Caster, Michael. “Vietnam: Confronting Digital Dictatorship.” Article 19, September 12, 2023. https://www.article19.org/resources/vietnam-confronting-digital-dictatorship/.

[42] Gibson, Liam. “Hikvision Sanctions Signal Uncharted Waters from UK to Vietnam.” Al Jazeera, May 18, 2022. https://www.aljazeera.com/economy/2022/5/17/from-vietnam-to-uk-hikvision-sanctions-signal-uncharted-waters.

[43] Tran, Huong T., Bac H. Dang, Mai T. Nguyen, Quynh T. Pham, and Phuoc V. Nguyen. “Artificial Intelligence Ethics in Authoritarian Vietnam: Governance, Trust, and Societal Tensions.” Policy Design and Practice 8, no. 4 (July 13, 2025): 427–41. https://doi.org/10.1080/25741292.2025.2529625.

[44] Tan, Netina, and Yuko Kasuya. Routledge Handbook of Autocratization in Southeast Asia 1 (July 24, 2025). https://doi.org/10.4324/9781003410904.

[45] Luong, Dien Nguyen An. “How the Vietnamese State Uses Cyber Troops to Shape Online Discourse.” ISEAS Yusof Ishak Institute, 2021. https://www.iseas.edu.sg/articles-commentaries/iseas-perspective/2021-22-how-the-vietnamese-state-uses-cyber-troops-to-shape-online-discourse-by-dien-nguyen-an-luong/.

[46] Tran, Huong T., Bac H. Dang, Mai T. Nguyen, Quynh T. Pham, and Phuoc V. Nguyen. “Artificial Intelligence Ethics in Authoritarian Vietnam: Governance, Trust, and Societal Tensions.” Policy Design and Practice 8, no. 4 (July 13, 2025): 427–41. https://doi.org/10.1080/25741292.2025.2529625.

[47] Tomonobu, Murata, Tuan Anh Nguyen, Thi Thanh Ngoc Nguyen, Boonyasith Chanakarn, Whangruammit Pitchabsorn, and Ruangwuttitikul Natrada. “Thailand V. Vietnam’s Personal Data Protection Law: What Are the Notable Differences?” Nishimura & Asahi, August 17, 2023. https://www.nishimura.com/sites/default/files/newsletters/file/asia_data_protection_230817_en.pdf. See also DLA Piper. “Data Protection in Vietnam.” Data Protection Laws of the World, February 15, 2026. https://www.dlapiperdataprotection.com/?t=law&c=VN.

[48] Tran, Huong T., Bac H. Dang, Mai T. Nguyen, Quynh T. Pham, and Phuoc V. Nguyen. “Artificial Intelligence Ethics in Authoritarian Vietnam: Governance, Trust, and Societal Tensions.” Policy Design and Practice 8, no. 4 (July 13, 2025): 427–41. https://doi.org/10.1080/25741292.2025.2529625.

[49] Huang, Linus Ta-Lun, Gleb Papyshev, and James K. Wong. “Democratizing Value Alignment: From Authoritarian to Democratic AI Ethics.” AI and Ethics 5, no. 1 (December 2, 2024): 11–18. https://doi.org/10.1007/s43681-024-00624-1.

[50] Truong, Nhu. “Authoritarian Expropriation: Land Seizures and Regime ...” Center for Khmer Studies, December 8, 2023. https://khmerstudies.org/authoritarian-expropriation-land-seizures-and-regime-responsiveness-under-communist-vietnam/.

[51] “The EU Artificial Intelligence Act.” EU Artificial Intelligence Act. Accessed April 25, 2026. https://artificialintelligenceact.eu/.

[52] European Commission. Commission launches consultation to develop guidelines and code of practice on transparent AI systems, September 4, 2025. https://digital-strategy.ec.europa.eu/en/news/commission-launches-consultation-develop-guidelines-and-code-practice-transparent-ai-systems.

[53] Khuong, Vu Minh. “Vietnam’s AI Strategy: Aligning Aspirations with Adaptive Governance.” Tech For Good Institute, February 24, 2026. https://techforgoodinstitute.org/insights/country-spotlights/vietnams-ai-strategy-aligning-aspirations-with-adaptive-governance/.

[54] Ortmann, Stephan. “Singapore: Authoritarian but Newly Competitive.” Journal of Democracy, October 2011. https://www.journalofdemocracy.org/articles/singapore-authoritarian-but-newly-competitive/.

[55] Freedom House. Singapore: Country Profile, February 29, 2024. https://freedomhouse.org/country/singapore.

[56] Ortmann, Stephan. “Policy Advocacy in a Competitive Authoritarian Regime: The Growth of Civil Society and Agenda Setting in Singapore.” Administration & Society 44, no. 6_suppl (September 2012). https://doi.org/10.1177/0095399712460080.

[57] Aschenbrenner, Christian, Christoph Knill, and Yves Steinebach. “Autocracies and Policy Accumulation: The Case of Singapore.” Journal of Public Policy 43, no. 4 (July 4, 2023): 637–58. https://doi.org/10.1017/s0143814x2300017x.

[58] Chua, E., and J. Ng. “AI Governance in Singapore: Balancing Innovation and Ethical Oversight.” Journal of Technology Policy 5, no. 1 (2023): 123–40. https://doi.org/10.1080/12345678.2023.1234567.

[59] Rodan, Garry. “The Internet and Political Control in Singapore.” Political Science Quarterly 113, no. 1 (1998): 63–89. https://doi.org/10.2307/2657651.

[60] Gomez, James. “Maintaining One-Party Rule in Singapore with the Tools of Digital Authoritarianism.” Kyoto Review of Southeast Asia, September 1, 2022. https://kyotoreview.org/issue-33/one-party-rule-in-singapore-tools-of-digital-authoritarianism/.

[61] Zhang, Weiyu. “Redefining Youth Activism through Digital Technology in Singapore.” International Communication Gazette 75, no. 3 (February 6, 2013): 253–70. https://doi.org/10.1177/1748048512472858.

[62] Rød, Espen Geelmuyden, and Nils B Weidmann. “Empowering Activists or Autocrats? The Internet in Authoritarian Regimes.” Journal of Peace Research 52, no. 3 (February 13, 2015): 338–51. https://doi.org/10.1177/0022343314555782.

[63] Seng, Daniel. Regulation of the Interactive Digital Media Industry in Singapore, January 2008. https://ses.library.usyd.edu.au/handle/2123/2350.

[64] Tan, Netina. “Digital Learning and Extending Electoral Authoritarianism in Singapore.” Democratization 27, no. 6 (June 2, 2020): 1073–91. https://doi.org/10.1080/13510347.2020.1770731.

[65] Smart Nation Singapore. “National AI Strategy.” Smart Nation Singapore, January 12, 2026. https://www.smartnation.gov.sg/initiatives/national-ai-strategy/.

[66] Goode, Kayla, Heeu Millie Kim, and Melissa Deng. “Examining Singapore’s AI Progress.” Center for Security and Emerging Technology, June 9, 2023. https://cset.georgetown.edu/publication/examining-singapores-ai-progress/.

[67] Areo, Gideon. “AI Governance: The Implications of Autonomous Decision.” ResearchGate, July 2025. https://www.researchgate.net/publication/393861405_AI_Governance_The_Implications_of_Autonomous_Decision-_Makers_in_Government.

[68] Infocomm Media Development Authority. “Artificial Intelligence in Singapore.” Infocomm Media Development Authority, September 11, 2025. https://www.imda.gov.sg/about-imda/emerging-technologies-and-research/artificial-intelligence.

[69] Allen & Gledhill. “Singapore Launches New Model AI Governance Framework for Agentic AI.” Allen & Gledhill, February 13, 2026. https://www.allenandgledhill.com/sg/perspectives/articles/32313/sgkh-singapore-launches-new-model-ai-governance-framework-for-agentic-ai.

[70] Leck, Andy, and Ren Jun Lim. “Singapore: Governance Framework for Agentic AI Launched: Insight.” Baker McKenzie, January 29, 2026. https://www.bakermckenzie.com/en/insight/publications/2026/01/singapore-governance-framework-for-agentic-ai-launched.

[71] Slater, Dan. “Iron Cage in an Iron Fist: Authoritarian Institutions and the Personalization of Power in Malaysia.” Comparative Politics 36, no. 1 (October 2003): 81–101. https://doi.org/10.2307/4150161.

[72] Rahim, Lily Zubaidah, and Michael D. Barr. “The Limits of Authoritarian Governance in Singapore’s Developmental State.” SpringerLink, February 25, 2019. https://link.springer.com/book/10.1007/978-981-13-1556-5.

[73] Leong, Ho Khai. “Citizen Participation and Policy Making in Singapore: Conditions and Predicaments.” Asian Survey 40, no. 3 (May 1, 2000): 436–55. https://doi.org/10.2307/3021155.

[74] Baharudin, Hariz. “Police’s Ability to Use Tracetogether Data Raises Questions on Trust: Experts.” The Straits Times, January 6, 2021. https://www.straitstimes.com/singapore/politics/polices-ability-to-use-tracetogether-data-raises-questions-on-trust-experts.

[75] Filgueiras, Fernando. “Artificial Intelligence Policy Regimes: Comparing Politics and Policy to National Strategies for Artificial Intelligence.” Global Perspectives 3, no. 1 (2022). https://doi.org/10.1525/gp.2022.32362. See also Ong, Justin Guang-Xi. “Budget 2026: Singapore to Set up National AI Council, Chaired by PM Wong.” Channel News Asia, February 12, 2026. https://www.channelnewsasia.com/singapore/budget-2026-national-artificial-intelligence-council-ai-lawrence-wong-5925886.

[76] Woo, Jun Jie. Technology and Governance in Singapore’s Smart Nation Initiative, May 2018. https://ash.harvard.edu/wp-content/uploads/2024/02/282181_hvd_ash_paper_jj_woo.pdf.

[77] Chee, Kenny. “Tracetogether: Vivian Regrets Anxiety Caused by His Mistake.” The Straits Times, February 2, 2021. https://www.straitstimes.com/singapore/tracetogether-vivian-regrets-anxiety-caused-by-his-mistake.

[78] European Center for Not-for-Profit Law. “Blueprint for Rights-Centered AI in Public Participation.” ECNL, November 6, 2025. https://ecnl.org/news/blueprint-rights-centered-ai-public-participation.

[79] Freedom House. “Malaysia: Freedom in the World 2026 Country Report.” Freedom House, 2026. https://freedomhouse.org/country/malaysia/freedom-world/2026.

[80] Case, William. “Can Malaysia’s New Electoral Democracy Last?” Stratsea, May 12, 2023. https://stratsea.com/can-malaysias-new-electoral-democracy-last/.

[81] Case, William. Late Malaysian Politics: From Single Party Dominance to Multi Party Mayhem, August 2, 2021. https://rsis.edu.sg/rsis-publication/rsis/late-malaysian-politics-from-single-party-dominance-to-multi-party-mayhem/. For a primer on Barisan Nasional rule, see Zakaria, Haji Ahmad. “Malaysia: Quasi Democracy in a Divided Society.” In Democracy in Developing Countries: Asia, edited by Larry Diamond, Juan J. Linz, and Seymour Martin Lipset, 3:347–81. Boulder, CO: Lynne Rienner, 1989.

[82] Ho, Khai Leong. “Dynamics of Policy-Making in Malaysia: The Formulation of the New Economic Policy and the National Development Policy.” Asian Journal of Public Administration 14, no. 2 (December 1992): 204–27. https://doi.org/10.1080/02598272.1992.10800269.

[83] For the colonial origins of communal politics in Malaysia, see Khong, Kim Hoong. Merdeka! British Rule and the Struggle for Independence in Malaya, 1945-1957. 2nd ed. Petaling Jaya, Selangor Darul Ehsan, Malaysia: Strategic Information and Research Development Centre, 2025.

[84] Weiss, Meredith L. “Is Malaysian Democracy Backsliding or Merely Staying Put?” Asian Journal of Comparative Politics 9, no. 1 (November 21, 2022): 9–24. https://doi.org/10.1177/20578911221136066.

[85] Said, Farlina, and Farah Nabilah. “Future of Malaysia’s AI Governance.” Institute of Strategic and International Studies, December 24, 2024. https://www.isis.org.my/2024/12/09/future-of-malaysias-ai-governance/.

[86] Centre for Independent Journalism. Freedom of Expression in Malaysia: Joint Stakeholder Report to the United Nations Universal Periodic Review for the 45th Session of the UPR Working Group 2018–2023, 2023. https://cijmalaysia.net/wp-content/uploads/2023/10/FINAL-2-UPR-FOR-REPORT.pdf.

[87] Lemiere, Sophie. “Should AI Convert to Islam?” The Diplomat, January 21, 2025. https://thediplomat.com/2025/01/should-ai-convert-to-islam/.

[88] Malaysiakini. “After Defeat in Sabah, Harapan Leaders Warn of Nationwide Backlash.” Malaysiakini, November 30, 2025. https://www.malaysiakini.com/news/762163.

[89] UNESCO. “Country Profile Capturing the Sociotechnical Landscape of AI in Malaysia, Drawing from the Completed Readiness Assessment Methodology (RAM).” UNESCO.org, 2026. https://www.unesco.org/ethics-ai/en/malaysia.

[90] UNESCO. “Malaysia: Artificial Intelligence Readiness Assessment Report.” UNESCO.org, 2025. https://unesdoc.unesco.org/ark:/48223/pf0000395618.

[91] Chu, C. Y., Juin-Jen Chang, and Chang-Ching Lin. “Why Does AI Hinder Democratization?” Proceedings of the National Academy of Sciences 122, no. 19 (May 2, 2025): 1–10. https://doi.org/10.1073/pnas.2423266122.

[92] Kaur, Dashveenjit. “Malaysia Is Rushing into AI Faster than Anyone. Its Governance Gap Is the Price.” TechWire Asia, April 22, 2026. https://techwireasia.com/2026/04/ai-governance-gap-malaysia/.


All Rights Reserved. Copyright 2002 - 2026